Civic Tech Field Guide

Sharing knowledge and productively growing the field

Artificial Intelligence (359)

Technologies that train models to autonomously support or function independently of direct human effort.

Suggested resources:

- Civic AI Prompt Library

- AI features in digital participation platforms, by Matt Stempeck

- The threat and promise of generative AI in the regulatory process.

- AI and the humanitarian sector: hype or hope? 🔊

- A round-up of internal AI policies

- CivicSpace.tech guide to Artificial Intelligence & Machine Learning, including its relevance to civic space, opportunities, risks, and case studies

- Handshake's Generative AI Resource Hub, a curated list of research, instructions, and projects from Handshake on generative AI.

- Watch: Assessing Responsible AI Use Cases in Local Government, by Open North

- Foundation models in the public sector, by the Ada Lovelace Institute

- Artificial Intelligence: Its potential and ethics in the practice of public participation. A Research Paper by the International Association for Public Participation Canada.

This database by Mozilla Foundation maps intersections between the key social justice and human rights areas of our time and documented AI impacts and their manifestations in society.

Throughout 2024, Rest of World is tracking the most noteworthy incidents of AI-generated election content globally.

AI4Democracy

Madrid (Spain)

The Center for the Governance of Change at IE University and Microsoft launched AI4Democracy, a global initiative that seeks to create knowledge and mobilize democratic governments, companies and civil society to promote action for a responsible use of artificial intelligence to defend and strengthen democracy.

Rentervention

Chicago, IL

A service of the Law Center for Better Housing (LCBH), here to help Illinois tenants facing housing issues.

An 8-month pilot testing 12 computer-vision sensors across four boroughs of NYC to improve street-level data collection and planning decisions.

Maru

London

Maru is a chatbot that helps you tackle online harassment.

CivicSearch is a search and discovery tool for everything that is said in local government meetings, texts, and laws. It covers 547 cities and towns in the US and Canada.

citymeetings.nyc

New York City

A tool that makes it easy to navigate and research government meetings. It uses LLMs to create granular chapters that readers can skim and share.

Climate Intelligence (CLINT)

Europe

The CLIMATE INTELLIGENCE – alias CLINT – project is a European-funded project whose main objective is to develop an Artificial Intelligence framework composed of Machine Learning techniques and algorithms to process big climate datasets for improving Climate Science...

Tangible AI

San Diego, CA

We are on a mission to help social sector organizations scale their impact, increase their outreach, and work smarter using artificial intelligence, chatbots, and smart automation.

During our four-week MOOC, you will learn about and explore the fascinating and ever-evolving world of technology and democracy, in particular the impact of artificial intelligence on freedom of expression in elections.

Unboxed City

Massachusetts Institute of Technology (MIT)

Art exhibition at MIT: critical explorations of AI and cities.

Anthropic is an AI safety and research company that's working to build reliable, interpretable, and steerable AI systems.

Aspen Digital, a program of the Aspen Institute, is launching the AI Elections Initiative as an ambitious new effort to strengthen US election resilience in the face of generative AI.

COA Beat Assistant

Manila, Metro Manila, Philippines

Custom GPT tool to help journalists in the Philippines parse long government audit reports for anomalies.

The AI Democracy Projects engage the implications of AI systems and tools, including predictive algorithms, machine learning, and frontier models, for democratic society.

Converlens

Melbourne VIC, Australia

Discover the modern engagement platform powered by natural language AI to help you gather feedback, manage your data and understand what is being said.

Legitimate provides journalists and publishers with the latest [AI] tools to enhance their work while helping audiences build trust and have context in the content they consume.

Self-assessment and other resources to help entrepreneurs, from seed stage to those exporting their technologies to other markets, incorporate AI ethical principles into their technological solutions.

MyCity Chatbot

New York City, NY, USA

NYC's Business Services Chatbot, which "uses Microsoft's Azure AI services to provide information in response to questions you have about starting or operating your business" in ten+ languages.

Global Observatory of Urban Artificial Intelligence

Barcelona, Spain

Its goal is to promote research and disseminate best practice in the ethical application of artificial intelligence in cities.

City AI Connect

Johns Hopkins University

A global community for cities to learn about generative AI together, faster.

Camden Talks Data: Machine learning

Camden Town, London

This video explains how Camden Council uses machine learning to improve council services.

Farmer.CHAT

India (Bhārat)

An AI Assistant by Gooey.AI and Digital Green to make vetted farmer knowledge accessible.

AI Accountability Network

Washington, DC

Pulitzer Center: Working with journalists and newsrooms that represent the diversity of the communities affected by AI technologies, the Network seeks to address the knowledge imbalance on artificial intelligence that exists in the journalism industry and to create a multidisciplinary and collaborative ecosystem that enables journalists to report on this fast-evolving topic with skill, nuance, and impact.

ATLAS is a crowdsourced (map) repository of projects happening in cities involving the use of artificial intelligence systems.

AI Journalism Lab

CUNY Graduate Center

This 3-month hybrid, tuition-free, and highly participatory program invites journalists to explore generative AI through theory and practice, and add meaningfully to the conversation.

This year we assess the AI readiness of 193 governments across the world. We are also introducing an interactive map to make our data more accessible!

This self assessment tool is designed to help policymakers in government understand how prepared their government is to use AI in a trustworthy way in the public sector.

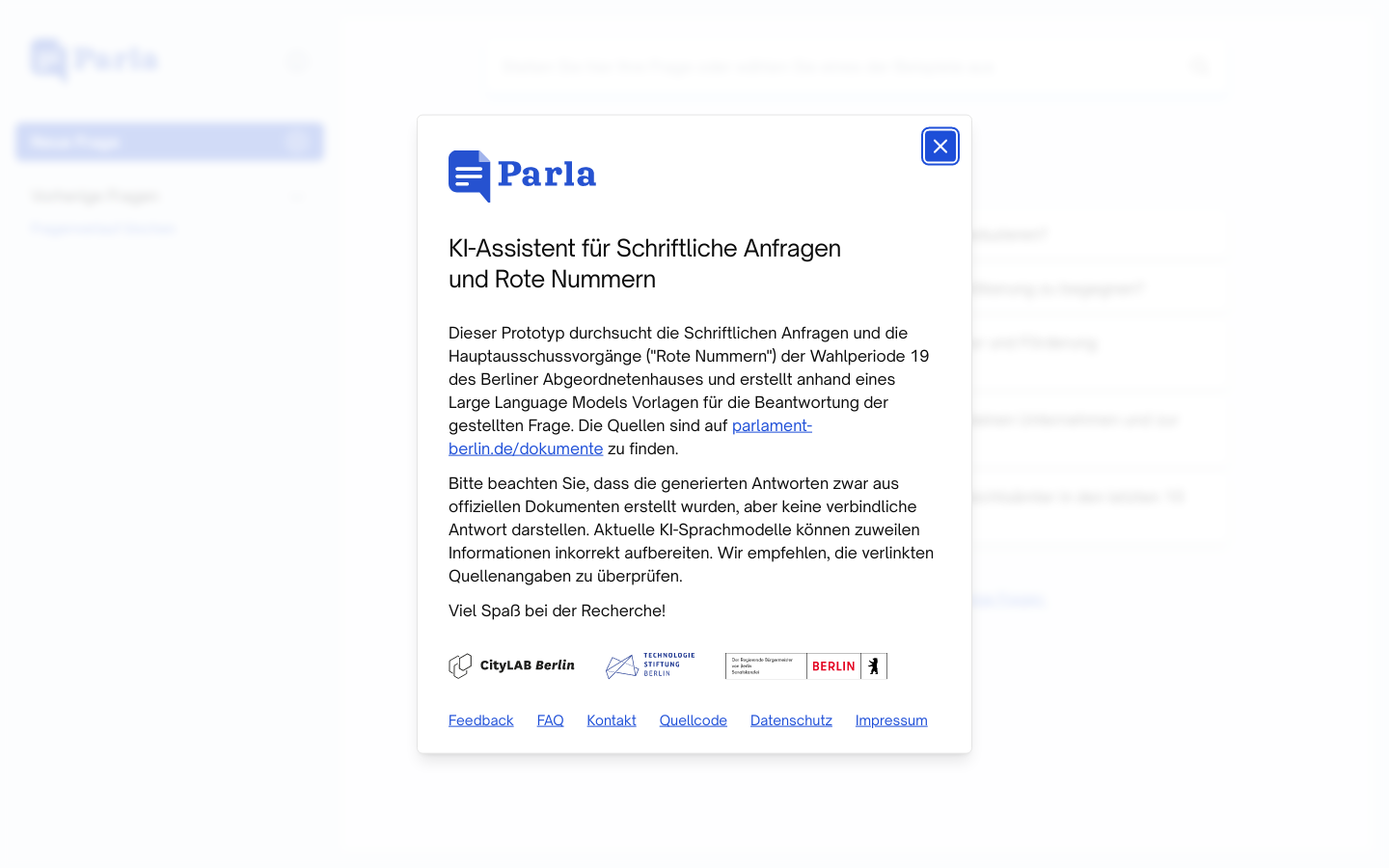

Parla

Berlin

This prototype searches the written inquiries and the Main Committee proceedings ("Rote Nummern") of the 19th electoral term of the Berlin House of Representatives (Abgeordnetenhaus) and uses a large language model to draft answers to the question posed.

OpenSky

Ireland (Éire)

Delivering results for Enterprise & Government for 20+ years - through Data, Process Automation and AI expertise, driven by continuous innovation.

Greyparrot

London

Unlock the power of AI waste analytics.

CrossTech

United Kingdom

Develops a suite of AI tools for automated railway inspection systems and mass transit optimization.

Anonimometro

Rome

Algorithmic tool used by the Italian government to fight tax evasion by cross-checking self-reported income against assets and other databases in a privacy-protecting way.

Prolific

London

Easily find vetted research participants and AI taskers at scale.

chatbot.team

Gurugram

Chatbot.team is a conversational messaging platform that offers chatbot development services for businesses of all sizes.

Innovatiana

Paris, France

Innovatiana is an Impact Tech startup specialized in Data Labeling for AI.

AI for Institutions

United Kingdom

The question we seek to address is: can we use AI itself to help us design and build better institutions, which enhance human capabilities for collaborating, governing, and living together?

Cooperative AI Foundation

United Kingdom

CAIF's mission is to support research that will improve the cooperative intelligence of advanced AI for the benefit of all.

The AI Assembly is the first national deliberative event on AI in the United States and represents a significant step toward understanding public sentiment on AI-related issues and shaping the conversation around the role of AI systems in society, with a focus on transparency, accountability, and responsible use.

Civox Ashley

London

Transform your political campaign or movement with AI-powered two-way voice calls that transform your voter contact.